A Complete Introduction to GPU Programming With Practical Examples in CUDA and Python - Cherry Servers

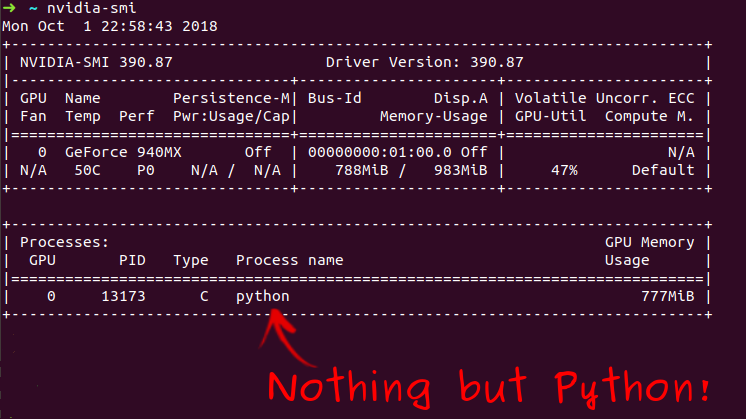

How to dedicate your laptop GPU to TensorFlow only, on Ubuntu 18.04. | by Manu NALEPA | Towards Data Science

Ki-Hwan Kim - GPU Acceleration of a Global Atmospheric Model using Python based Multi-platform - YouTube

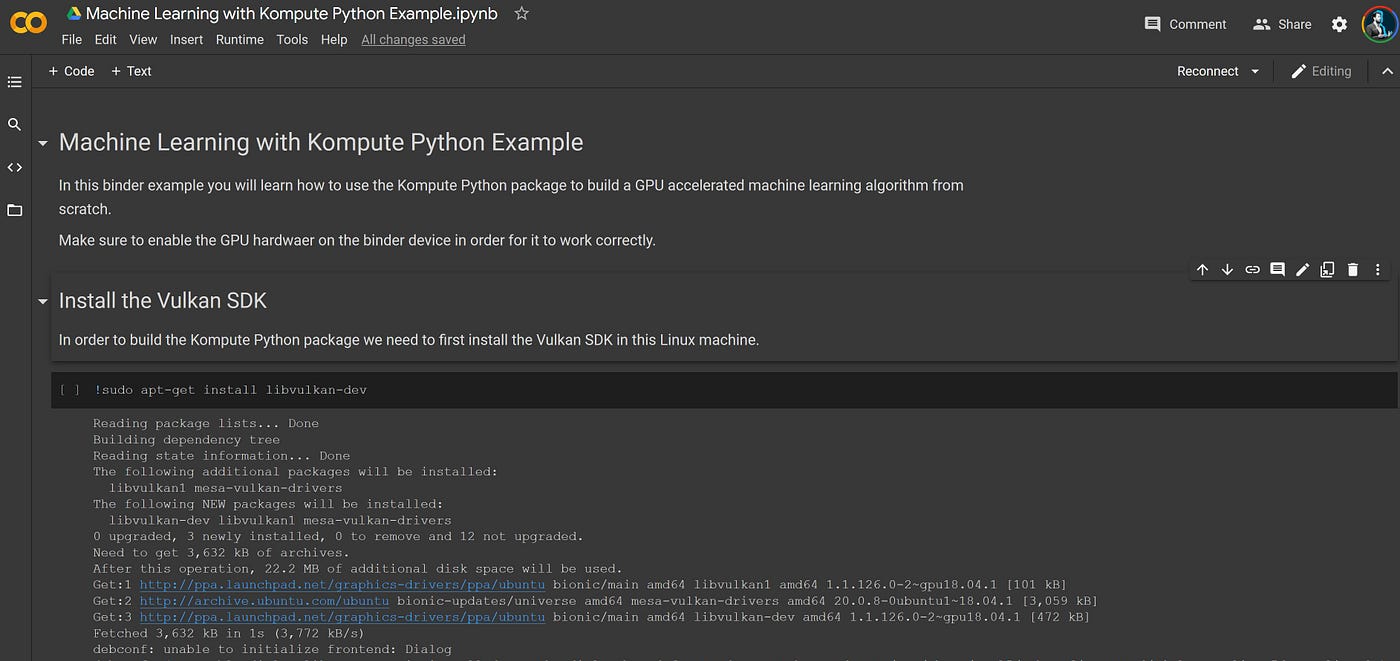

Beyond CUDA: GPU Accelerated Python on Cross-Vendor Graphics Cards with Vulkan Kompute - TIB AV-Portal

Amazon.com: Hands-On GPU Computing with Python: Explore the capabilities of GPUs for solving high performance computational problems: 9781789341072: Bandyopadhyay, Avimanyu: Books

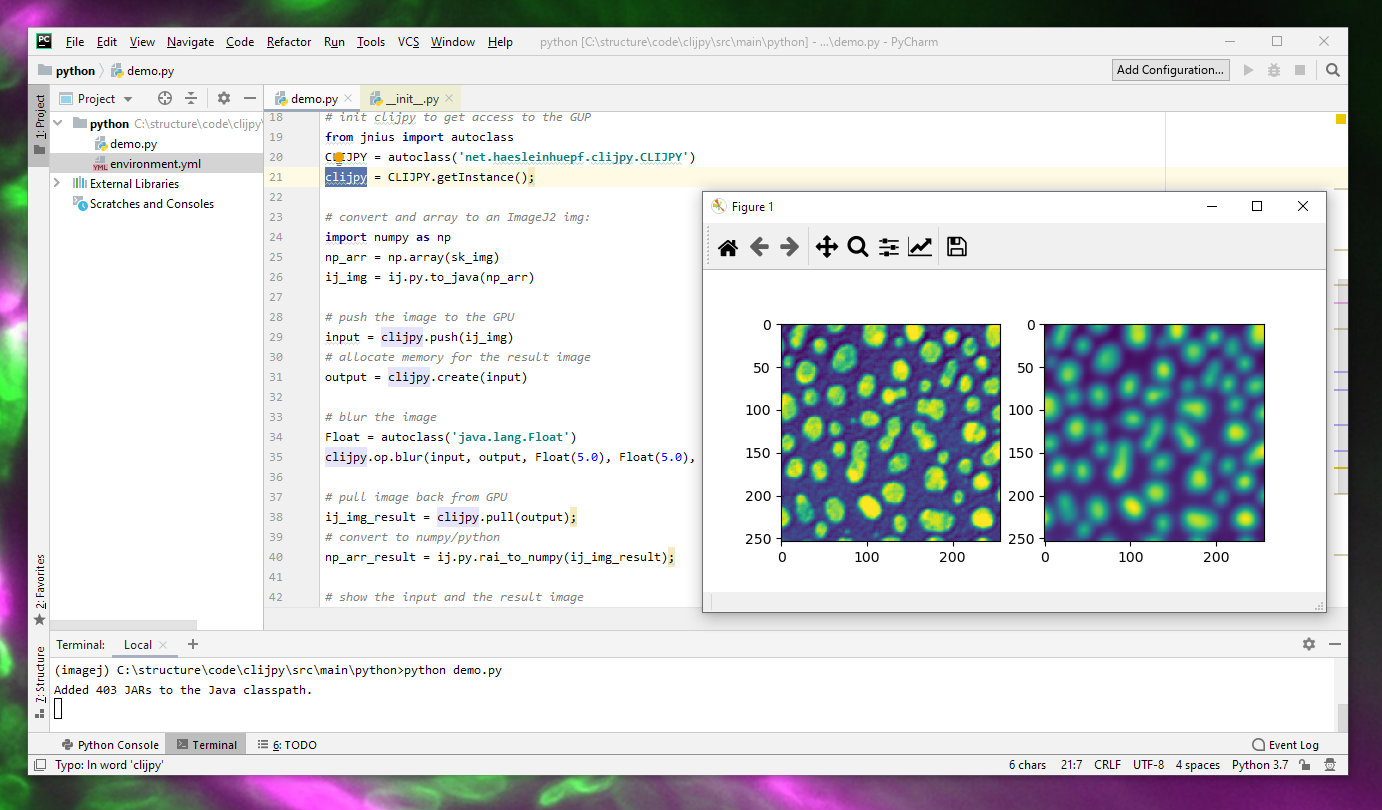

Beyond CUDA: GPU Accelerated Python for Machine Learning on Cross-Vendor Graphics Cards Made Simple | by Alejandro Saucedo | Towards Data Science

Practical GPU Graphics with wgpu-py and Python: Creating Advanced Graphics on Native Devices and the Web Using wgpu-py: the Next-Generation GPU API for Python: Xu, Jack: 9798832139647: Amazon.com: Books

Hands-On GPU Programming with Python and CUDA: Explore high-performance parallel computing with CUDA: 9781788993913: Computer Science Books @ Amazon.com

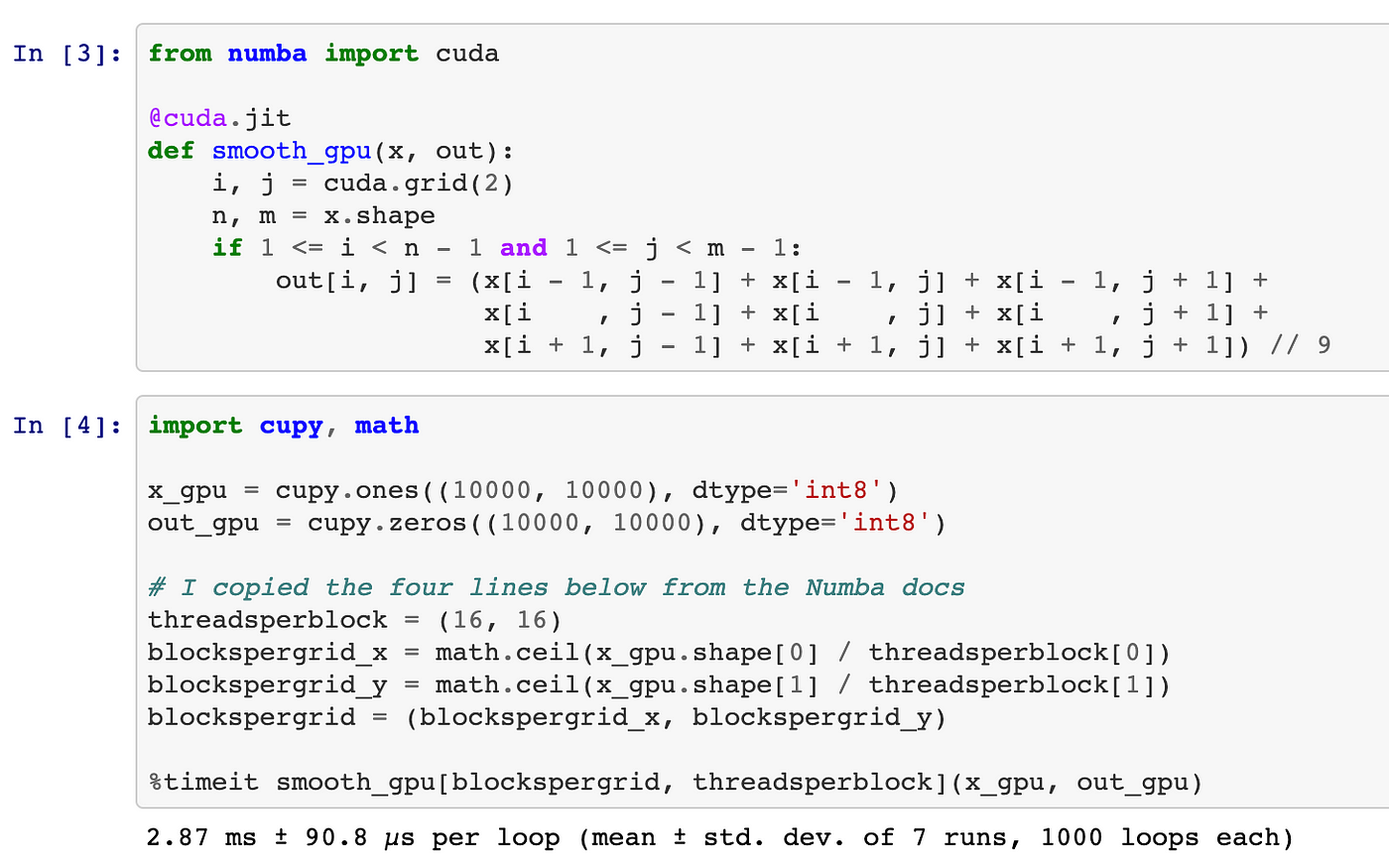

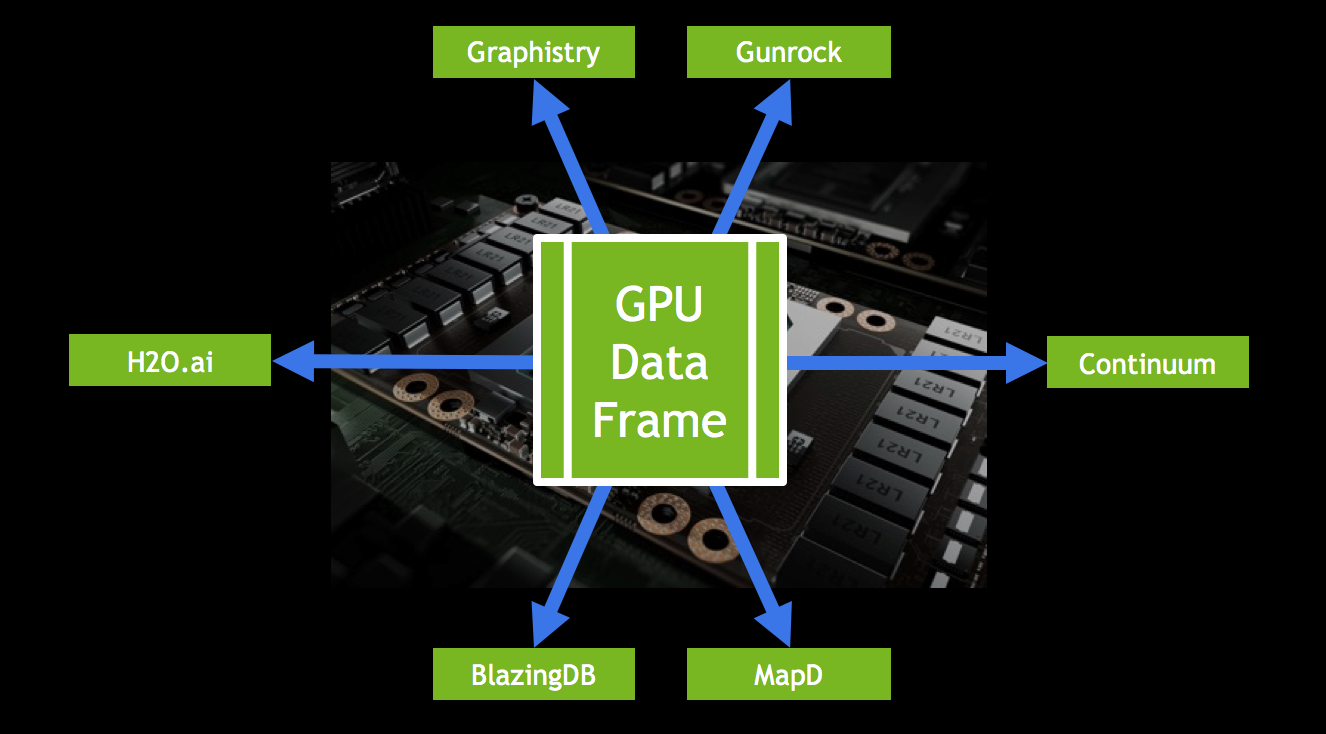

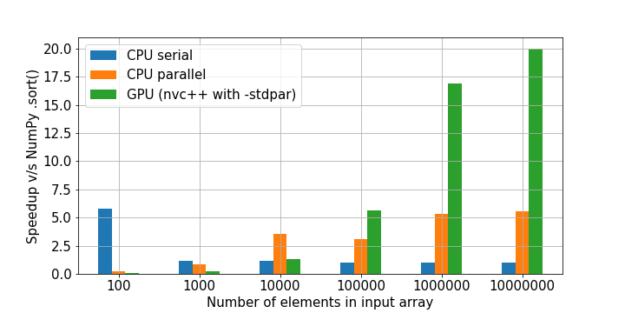

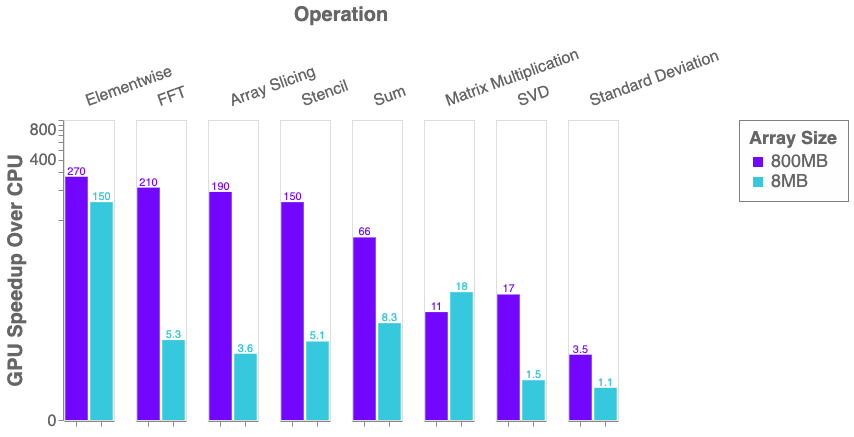

Python, Performance, and GPUs. A status update for using GPU… | by Matthew Rocklin | Towards Data Science

GitHub - meghshukla/CUDA-Python-GPU-Acceleration-MaximumLikelihood-RelaxationLabelling: GUI implementation with CUDA kernels and Numba to facilitate parallel execution of Maximum Likelihood and Relaxation Labelling algorithms in Python 3
